Recently we needed to determine if certain URLs in one of our older .asmx services were still in use. The best way for us to do this was to review the IIS logs for our site and see whether there were any hits against the given pages. However, once I took a look through one of our large text log files, I quickly realized that the log file was a lot more complex than I had anticipated and that I was going to need a utility to help me.

After reviewing the log files I realized I had a few additional issues:

- I needed to check the logs for multiple days across multiple servers in our load-balanced pool. We have four servers in the pool and I wanted to search three days of logs, which meant twelve IIS log files. I’m not sure if you’ve looked at an IIS log file lately, but they are not easy to read or search in a standard text editor.

- We use a tool to monitor our production sites to make sure they are online and alert us when they are having issues. The monitoring utility hits different pages on the site with an HTTP request at a regular polling interval to make sure they are up and responding correctly. These requests end up in the log and of course do not matter when I am checking for consumers of the web services. Luckily we add a parameter to the query string on these requests just so that we can filter them out of the logs. So if we find “monitor=1” in the query string then we know the request should not be counted when determining usage patterns. I needed a way to filter these requests out of the result set when looking for valid consumers of the web service.

IIS Log Parser to the rescue! After doing a little bit of searching around I came across this command line tool that allows you to query your log files with a SQL style syntax. Here is the query I was able to use to search for any occurrences of my service above:

PS C:\temp\logs> logparser -i:W3C "select count(*) from *.log where cs-uri-query not like '%monitor%' and cs-uri-stem like '%20100728%'"

For someone who is familiar with SQL syntax this utility is extremely easy to use. The other thing to note about the query is how easily the tool lets me aggregate all of the files. Notice the *.log in the query? That just means search all of the files in the current directory with an extension of .log. All I had to do was drop the twelve log files into that directory and start searching.
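To make the logic of the query above concrete, here is a rough Python sketch of the same kind of count. It is not how Log Parser works internally, and it is no replacement for it; it just spells out the filter. The function names (`count_hits`, `count_hits_in_dir`), the `Orders.asmx` service name and the `sample` rows are all made up for illustration, and the case-insensitive matching is a choice made for this sketch.

```python
import glob
import os


def count_hits(lines, stem_contains, exclude_query="monitor"):
    """Count W3C log rows whose cs-uri-stem contains stem_contains,
    skipping rows whose cs-uri-query contains exclude_query."""
    fields = []
    hits = 0
    for line in lines:
        line = line.strip()
        if line.startswith("#Fields:"):
            # The #Fields header names the columns for the rows that follow.
            fields = line[len("#Fields:"):].split()
            continue
        if not line or line.startswith("#") or not fields:
            continue  # skip blanks, other directives, rows with no header yet
        row = dict(zip(fields, line.split(" ")))
        stem = row.get("cs-uri-stem", "").lower()
        query = row.get("cs-uri-query", "").lower()
        if stem_contains.lower() in stem and exclude_query.lower() not in query:
            hits += 1
    return hits


def count_hits_in_dir(directory, stem_contains):
    """Sum count_hits over every .log file in directory (like FROM *.log)."""
    total = 0
    for path in sorted(glob.glob(os.path.join(directory, "*.log"))):
        with open(path, encoding="utf-8", errors="replace") as handle:
            total += count_hits(handle, stem_contains)
    return total


# Hypothetical sample rows, not taken from a real log.
sample = [
    "#Fields: date time cs-method cs-uri-stem cs-uri-query sc-status",
    "2010-07-28 00:00:01 GET /Services/Orders.asmx - 200",
    "2010-07-28 00:00:02 GET /Services/Orders.asmx monitor=1 200",
    "2010-07-28 00:00:03 POST /Services/Orders.asmx op=GetOrder 200",
    "2010-07-28 00:00:04 GET /default.aspx - 200",
]
print(count_hits(sample, "Orders.asmx"))  # prints 2
```

The monitoring request and the unrelated page are both left out of the count, which is exactly what the two conditions in the where clause are doing.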

The search was also extremely quick… At the end of the query, log parser shows the results along with the number of elements processed and execution time. In this case it searched 372,195 elements in 1.58 seconds.

I found some great query examples on the IIS Log Parser page on the IIS site and on Mark Lichtenberg’s blog.

When I first looked at this tool on the IIS site I was a little skeptical because it hadn’t been updated since 2005. I gave it a shot anyway and I am really glad I did… it saved me a ton of time! I guess the log file formats just don’t change that often.

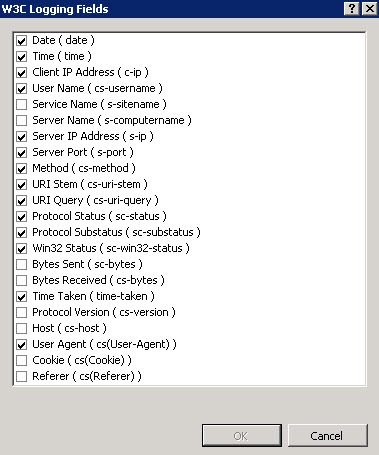

One last parting tip… I was able to find my log file format in IIS->Web Site Node->Logging.

Also, if you click on Select Fields you can get a nice list of all the fields IIS is logging to the file. This is really useful for figuring out the field names for your queries against IIS Log Parser.
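If you don't have access to the IIS manager, the same field names are also written near the top of each W3C log file in a `#Fields:` line. Here is a small Python sketch that pulls them out; `read_field_names` is a hypothetical helper and the `header` lines are a made-up sample of what such a header can look like, so your own field list may differ.

```python
def read_field_names(lines):
    """Return the column names from the first #Fields: directive found."""
    for line in lines:
        if line.startswith("#Fields:"):
            return line[len("#Fields:"):].split()
    return []  # no #Fields directive in the lines given


# Hypothetical sample header, for illustration only.
header = [
    "#Software: Microsoft Internet Information Services 7.5",
    "#Version: 1.0",
    "#Date: 2010-07-28 00:00:00",
    "#Fields: date time s-ip cs-method cs-uri-stem cs-uri-query s-port "
    "cs-username c-ip cs(User-Agent) sc-status sc-substatus "
    "sc-win32-status time-taken",
]

for name in read_field_names(header):
    print(name)
```

To run it against a real file, pass an open file handle instead of the `header` list.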

Happy log searching!

This really is a great tool. I installed the Log Parser .msi, but also had to add the .exe path to my PATH variable to get it to work from the command line.